Releasing the knowledge we don’t have time to lose: collaboration between the University Museum of Zoology and Cambridge University Library

As part of the AI for Cultural Heritage Hub project (ArCH), Cambridge University Library has been collaborating with the Museum of Zoology (UMZC) to explore the use of AI to unlock its collections. In this blog post, UMZC Collections Manager Mathew Lowe explains this collaboration in more detail.

At the UMZC we hold an amazing collection of zoological specimens, archives and expertise, built up over the last 220 years from across the globe. As a snapshot of life on Earth and a record of a changing planet, our work with scientists from multiple countries and disciplines is invaluable in combating biodiversity loss, understanding evolution and inspiring future generations.

With 2.2 million specimens we have an abundance of specimen data and countless archives, yet we are short on one major thing – time. And time is absolutely of the essence. If only a small percentage of our records are available, by the time we make them available it may be too late. That bit of valuable information that could save a species or restore a habitat may come decades too late. So could AI help?

Until recently, AI to me meant infuriating chatbots when trying to get a car insurance quote, even more infuriating Excel formulae hints that didn’t work, and scary news stories about how it’s going to make us all unemployed one day. Personally, I had never encountered a positive side! However, late in 2024 I became involved in the AI for Cultural Heritage Hub project (ArCH), a cross-Cambridge initiative led by the University Library, support by funding from ai@cam. Thrown into the mix were lovely folks from other cultural heritage institutions in Cambridge, experts from the Department of Applied Mathematics and Theoretical Physics, and a group of technical and AI superstars.

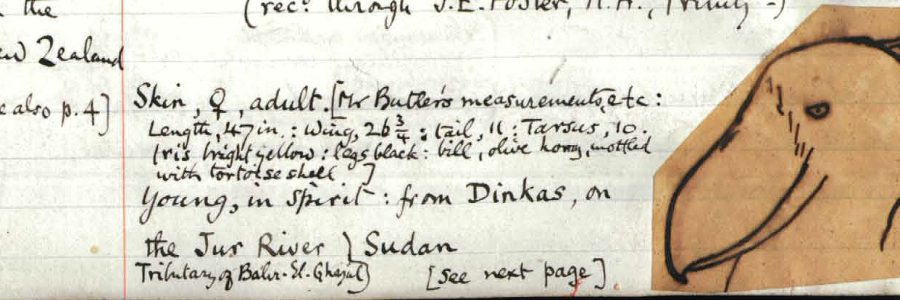

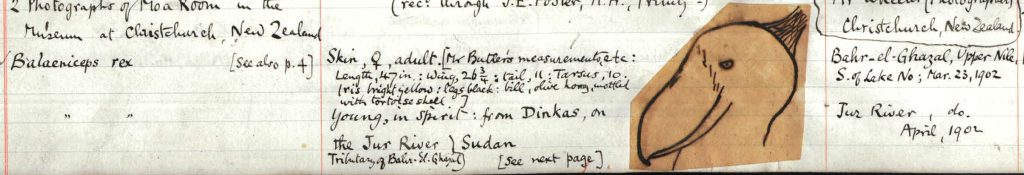

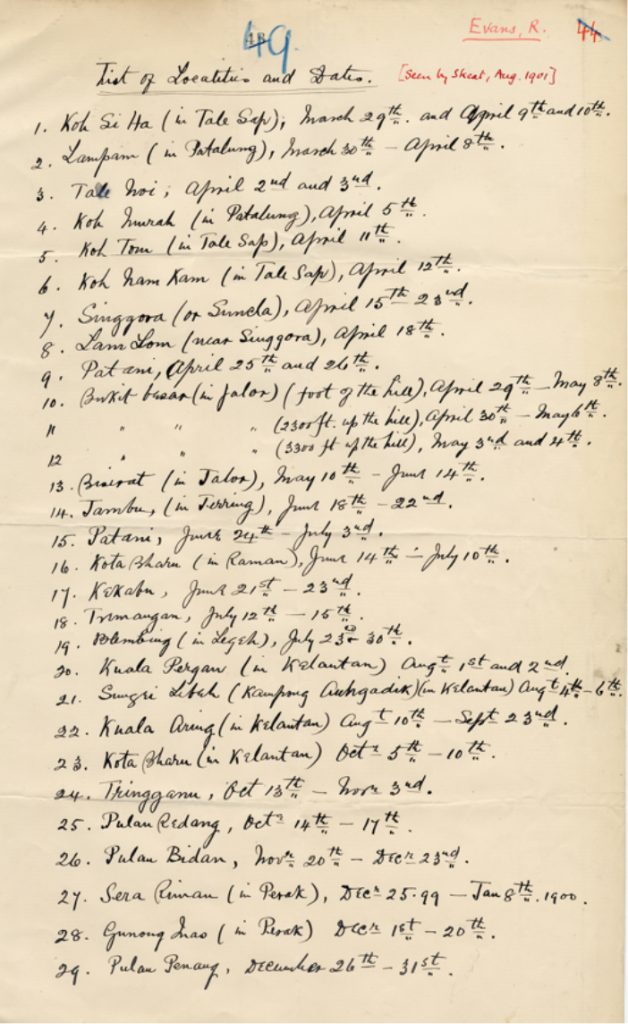

What could I provide to this wonderful fellowship? Two things actually – problems and a complete lack of understanding. In other words I was a luddite guinea pig, and if I could be trained to get the best out of AI then literally anybody could do it. On to the problems – UMZC has a collection of registers, a series of Victorian handwritten bookkeeping ledgers that record when an object was collected, where it was collected and by whom (amongst many things). Not all this data has made it into the electronic database – sometimes there are errors, or perhaps an object was long ago disposed of. None of it is easily searchable, although it has all been scanned into PDFs, but it is all valuable information.

We also have a superb collection of 2,900 historic letters, neatly bound, imaged and catalogued with brief descriptions but without being fully transcribed. Within those letters lies information about site descriptions, juicy gossip about Victorian scientists, and insights into colonial attitudes. Could AI provide the means to free up this information? To transcribe it neatly, make it searchable and do it all in the blink of an eye? Well as I said, I’m an AI technical luddite, so I had no idea. But we had to try.

Whilst I was pondering the problems and chatting to the experts, the AI hub was being set up by the University Library’s Jennie Fletcher and Tuan Pham. By May 2025, the first version of the hub was ready for testing, so we submitted some pages, drafted some command prompts and in the blink of an eye what came back was…unfortunately, a mess. Words had been mis-transcribed, some text had been made up, and as we discovered it was therefore unusable. Oh dear. As one of the experts explained to me, rather brilliantly as I have to deal with one at home, AI behaves like a four-year-old child. It wants to please, it will not only attempt to do what it’s told but will also go off on a random tangent, making up words rather than admit it can’t do it. So, for example, where a human eye reads “skulls” with a list of locations, the AI rather oddly transcribed “shells” and followed this up with a list of made-up species names in an effort to please. Obviously, this wouldn’t do.

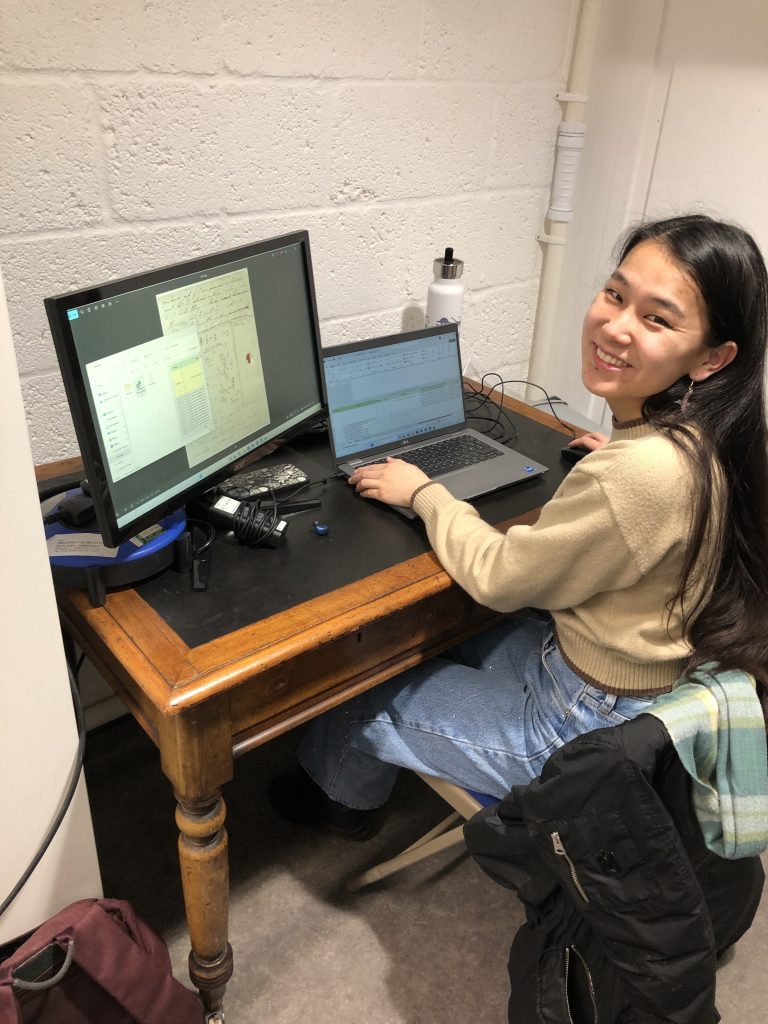

Whilst the AI experts grappled with the problem over the summer, I turned my attention to the letters. Could we use the AI hub to transcribe them? Fortunately, around the same time we had recruited a wonderful volunteer, Grace Kinney-Broderick, who whilst doing her PhD, wanted to have a go at some Museum-based projects for a couple of hours a week.

Like myself, Grace became a guinea pig for the cause, feeding the scans of the letters into the AI hub and recording what came out. What we both discovered is the lesson I think we all needed to realise with AI based tools – they are simply a tool, they are not a silver bullet. Words and letters that we struggled to read, were also a struggle for the AI. But combine the AI with the human eye and it’s akin to putting on a pair of reading glasses that you thought you didn’t need. Suddenly things do become clearer and more legible.

In addition, even when the AI made a few errors in the transcription, the time taken to correct those errors was far less than it would have taken a person to type out the letter itself. Grace and I estimate that using AI to transcribe the letters makes the process at least three to four times faster.

So a project that is in theory so large that the time taken to complete it means you’ll never attempt it suddenly becomes a lot more feasible. Little by little, Grace is now chipping away at those letters with fascinating insights into how our early collections were acquired, and how much some of those Victorian scientists disliked each other.

But what of the registers? Well, whilst we focused on letters, the University Library’s wonderful ArCH tech-wizards Amparo Gimeno-Sanjuan and Jennie Fletcher were working away in the background. Amparo was working on the prompt engineering, crafting longer and more nuanced commands than we had initially tried. This required some domain literacy, careful structuring of complex queries, anticipation of the model’s limitations such as hallucinations, precise language in the instructions, and critical evaluation of the outputs. Jennie was working all the while on the tools of the ArCH hub that made all of this possible. The result was a transcription that was around 80% accurate. In other words, we will still need the human element to check and complete data, but I now have a usable searchable transcription that guides me to the correct part of the register if it detects a keyword match.

There’s a lot of work to be done going forward, we have built up a stockpile of data to slowly check. We’re also investigating the environmental impacts of AI with small scale projects like this and advocating for this impact to be factored into future projects. But we have learned that yes, we can utilise AI, but we will (I hope) always need that people power, especially when coupled with insider knowledge that only comes with years of working with the collections. We can use these tools to make out-of-reach projects more manageable and efficient.

We’ve also learned, perhaps unsurprisingly, that collaborations across disciplines yield the best results. I still don’t understand how AI works, but I now know people who do! If you would like to continue following the work of the AI for Cultural Heritage Hub project, please join the ArCH mailing list.